AI Didn’t Build My App. I Built It by Knowing When AI Was Wrong.

I used AI to migrate a Python app to a cloud stack I’d never touched: FastAPI, Next.js, and 4 managed services. It worked, but not the way most people imagine. This is the honest version, including the failures, the silent bugs, and why 30 years of engineering judgment mattered more than the AI that wrote the code.

1. Prelude: The First Time I Tried This, It Failed

Six months before the migration I’m about to describe, I tried something similar and it went badly.

Dog Log was my first attempt at AI-assisted development, a basic React CRUD app with a Firebase backend. I threw every tool at it: Perplexity, Gemini, Gemini Code Assist, Antigravity, JetBrains, VS Code. I was exploring workflows more than building a product, and it showed. The use cases evolved faster than the architecture could absorb them. I was cost-sensitive, so I used the cheapest or free version of everything. I didn’t understand which models were good at what. Keeping the instruction files for multiple AI tools in sync (CLAUDE.md, GEMINI.md, AGENTS.md) also turned out to be its own kind of engineering overhead.

The experience was superficial, exploratory, and very organic: compounding architectural issues, no governance, no review discipline. I learned a lot about the tools (which really was the point), but the end result wasn’t production-ready.

So when I decided to migrate my earnings transcript app to the cloud a few months later, I already knew what failure looked like. I also had a clearer picture of the AI-assisted development landscape and some early ideas about how to improve the outcomes. I had more experience with the tools, was working with a single AI instead of many, and was migrating an existing app rather than building from scratch. Still, the process differences were the biggest factor.

2. The App That Couldn’t Leave My Laptop

As part of a different experiment, I had built an interactive learning tool for financial earnings transcripts. The app worked, but only for me, and only if I was patient with it.

To demo it, I’d start the Python main file by hand and wait for it to load. The interface was a Streamlit layout: the transcript viewer consumed the left 65% of the screen, and the right side was a stack of expanders, one for each feature. Vocabulary. Sentiment analysis. Key takeaways. Q&A analysis. Feynman learning chat. All crammed into collapsible panels with no discoverability and no flow. Even I found myself asking “how do I use this?” after taking a two-day break.

If someone else wanted to run it, they’d need to clone the repo, install Postgres and pgvector, manually apply migrations, sign up for 4 different API accounts, configure a Python virtual environment, store secrets safely, ingest transcripts via a CLI, and then launch the GUI. That’s 8 steps requiring real technical knowledge before a user can see a single earnings call.

The app was also pushing the boundaries of what Streamlit could do. Performance was degrading as complexity grew, and the learning flow ideas I wanted to build (progressive discovery, contextual drill-down, a proper analyst framework) were architecturally unrealistic in the current stack.

I wanted to set my app free, but I also wanted to modernize my own skills. The last time I was hands-on with web apps, the path to production was pretty much “rent a VPS and deploy to that.” Containerized apps, deployment pipelines, the granular services now available (Modal for functions, Vercel for frontend, Railway for APIs, Supabase for database), all of it was new territory at the detail level.

3. Two Hours of Planning, Zero Lines of Code

I didn’t start by diving in and telling Claude to just start coding. I started by planning.

I was using Claude Code, and I started by typing something like: I have this app and I want to move it to the cloud. Analyze the current app and tell me what I would need to do to turn it into a cloud-native stack.

What came back was an analysis of the existing monolith alongside a variety of options: API frameworks, database services, frontend frameworks, and hosting platforms, each with trade-offs laid out so I could compare and make selections. I used both Claude and Perplexity to narrow the options across a couple of rounds, then a final review where I made the actual decisions.

That was step one. Step two was a high-level architecture plan: deciding what parts would move where, and why. Instead of just taking Claude’s first answer, I opened a separate, un-prepped session (no project context loaded) and asked it to cold-review the proposed architecture. The review offered structural and configuration improvements that we incorporated back into the main plan. Getting a second opinion from the same model but without the conversational anchoring turned out to be a meaningful quality check.

Step three was design. I used specialized review and design tools from gstack, an open-source AI toolchain for product development: one tool for evaluating the learning flow from a UX perspective, another for iteratively generating and comparing UI variants. The design review suggested a progressive discovery flow, improved navigation, and adopting a component library. After two rounds of refinement, the base design direction was set. Then the variant generator produced different UI options across about 5 rounds of combining features and traits from different samples. The final design was clean, navigable, and accessible.

The last step before coding was a two-pass feature analysis. First: inventory everything the prototype does. The app had grown organically in small slices, many of which existed to support larger features that hadn’t been exposed yet. Second: deduplicate and filter. Some NLP-based ingestion was producing lower-quality results than simple calls to Haiku, so those got cut. A feature idea for AI-assisted drill-down into individual topics (evasive responses, industry terms) got promoted from stub to full feature. Once we had the final feature set, we broke it into milestones and issues.

The issues themselves were almost over-detailed, but that was intentional. I ran each one through gstack’s specialized review tools (CEO-perspective review, engineering-manager review, security audit) to incrementally add detail from different perspectives. By the time I was done, I had a backlog of work items I’d actually assign to a developer.

Two hours. Zero code. But both Claude and I had a shared starting point: what we were building, why each decision was made, and what the work looked like broken into pieces. That shared starting point turned out to be the most important thing I built. It set the stage for everything that followed.

4. The Build and What Broke Along the Way

March 27th was the densest day of the entire migration. 15 PRs merged, from monorepo scaffolding to a working production deployment. But the highlight reel hides the texture.

The morning started with parallel work streams. While Claude scaffolded the monorepo and ported existing code, I was setting up accounts with the platform services. PR reviews came in roughly every 20 minutes. The core loop worked: Claude builds, I review, we iterate, merge, move on.

Then the Railway deployment happened.

Getting FastAPI to actually run on Railway required fixing 4 problems in sequence. First, the Dockerfile was copying the wrong requirements file. Fix, deploy, wait 30 seconds — next error. The root-level Python modules weren’t importable inside the container. Fix, deploy, wait — next error. Railway’s PORT variable wasn’t being shell-expanded in the start command. Fix, deploy, wait. Environment variables weren’t reaching the container at all because I’d configured them as “shared variables” instead of per-service variables.

None of the individual problems were hard. But they arrived in sequence with no signal about what was next. The debugging loop (fix, push, wait 30-60 seconds, read the logs) amplified the cost of every wrong turn. Claude struggled here more than anywhere else in the project. Its guidance on Railway-specific configuration was imprecise: it was vague about where to find settings in the UI and about what “shared variables” actually means. The root cause was a mismatch between local dev assumptions (PYTHONPATH set by a script, env vars sourced from a file) and how Railway actually runs containers. Claude didn’t have the platform-specific knowledge to anticipate that gap, and I didn’t have enough Docker experience to spot it myself.
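The last two failures in that chain (the unexpanded PORT and the missing environment variables) are the kind a startup check can surface in one deploy instead of two. What follows is a minimal sketch, not the project's actual entrypoint: the module path `app.main:app` and the variable names `DATABASE_URL` and `LLM_API_KEY` are assumptions made up for illustration.

```python
import os
from typing import List, Mapping, Optional

# Hypothetical names: substitute whatever the service actually needs.
REQUIRED_VARS = ("DATABASE_URL", "LLM_API_KEY")


def missing_vars(env: Mapping[str, str], required=REQUIRED_VARS) -> List[str]:
    """Return the required variables that are absent or empty."""
    return [name for name in required if not env.get(name)]


def server_command(env: Optional[Mapping[str, str]] = None,
                   default_port: int = 8000) -> List[str]:
    """Build the server command with PORT resolved in Python.

    Reading PORT here avoids depending on a shell to expand "$PORT"
    in the container's start command.
    """
    env = os.environ if env is None else env
    missing = missing_vars(env)
    if missing:
        # Fail loudly at startup, naming every missing variable at once.
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    port = int(env.get("PORT", default_port))
    # "app.main:app" is an assumed module path, not the project's real one.
    return ["uvicorn", "app.main:app", "--host", "0.0.0.0", "--port", str(port)]
```

An entrypoint script could end with `cmd = server_command()` followed by `os.execvp(cmd[0], cmd)`, so the container's start command is just `python` plus that script and nothing needs shell expansion.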

We recovered. Production went live. And immediately the next class of problems appeared.

A security gap surfaced during the architecture review: any authenticated user could hit admin API routes because the middleware only guarded page routes, not the API layer. This was my own knowledge gap with FastAPI authentication. It slipped through my code review because I didn’t know enough to catch it. One of several moments where my 30 years of experience didn’t help because the specific technology was foreign.
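The shape of that gap is easier to see stripped of framework detail: the role check has to run where the API handler runs, not only in whatever guards the pages. The sketch below is framework-agnostic and every name in it (`User`, `Forbidden`, `require_role`, `delete_transcript`) is hypothetical. In FastAPI the equivalent check is usually attached to a route or router as a dependency via `Depends`; that wiring is omitted here so the example runs with the standard library alone.

```python
from dataclasses import dataclass
from functools import wraps


@dataclass
class User:
    name: str
    role: str  # hypothetical roles: "admin" or "member"


class Forbidden(Exception):
    """Raised when an authenticated user lacks the required role."""


def require_role(role: str):
    """Decorator that enforces a role on the API handler itself."""
    def decorator(handler):
        @wraps(handler)
        def wrapper(user: User, *args, **kwargs):
            if user.role != role:
                raise Forbidden(
                    f"{user.name} is not allowed to call {handler.__name__}"
                )
            return handler(user, *args, **kwargs)
        return wrapper
    return decorator


@require_role("admin")
def delete_transcript(user: User, transcript_id: int) -> str:
    # Hypothetical admin-only operation.
    return f"deleted {transcript_id}"
```

Being authenticated is not enough to get through: `delete_transcript(User("bob", "member"), 7)` raises `Forbidden` no matter which page or client issued the call.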

The first-day crunch was over, but the problems didn’t stop. Over the following week, a different class of failures emerged.

The CSS problems started first. I needed to animate the height of a collapsible panel, a pattern that already existed in the codebase, one file away from where Claude was working. Instead of checking existing code, Sonnet (Claude’s faster, cheaper model) went down a path of searching for a theoretically perfect solution in the Base UI library internals. I had to intervene and redirect it to the established pattern. That same day, it failed 2 more times on a separate scroll layout issue. Scrolling either didn’t activate at all or clipped content in unexpected ways. I had to switch to Opus (the slower, more capable model) to finish it.

Around the same time, I caught a silent operator precedence bug in the ingestion pipeline. A missing pair of parentheses in a Python expression was causing theme names to be discarded whenever the LLM returned an empty terms array, silently replacing real labels with “Topic 1”, “Topic 2” placeholders. Sonnet misdiagnosed it; Opus found the fix in one pass. The bug had been there for days, degrading data without anyone noticing. That’s the kind of thing that only gets caught by someone who knows what the output is supposed to look like.
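The exact expression isn't reproduced here, but a hypothetical one with the same shape shows how this class of bug hides. In Python, a conditional expression (`x if cond else y`) binds more loosely than `or`, so leaving out the parentheses changes which part the `else` applies to. The function and argument names below are made up for illustration.

```python
def label_buggy(name, terms, index):
    # Parsed as: (name or terms[0]) if terms else f"Topic {index}"
    # so an empty terms list discards a perfectly good name.
    return name or terms[0] if terms else f"Topic {index}"


def label_fixed(name, terms, index):
    # Parentheses make the placeholder a last resort.
    return name or (terms[0] if terms else f"Topic {index}")


print(label_buggy("Margin pressure", [], 1))  # Topic 1
print(label_fixed("Margin pressure", [], 1))  # Margin pressure
```

Both versions agree whenever `terms` is non-empty, which is why a bug like this can pass casual testing and only show up as degraded labels in real data.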

Then came a test isolation bug that exposed the sharpest model capability gap I’d seen. A mock’s side_effect was bleeding between tests because of an internal desync in Python’s unittest.mock. Sonnet tried simplistic troubleshooting approaches that led it in circles: adding print statements, retrying the same fixes with slight variations. I switched to Opus, which diagnosed it in about 10 minutes by systematically bisecting the test ordering and instrumenting the mock object’s identity. The difference wasn’t speed. It was approach. Brute-force debugging doesn’t work on subtle framework bugs. You need a model that reasons about internal architecture, not one that just tries things.
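The specific desync isn't reproduced here. The sketch below shows a simpler, documented relative of it, as an assumption about the general failure mode rather than the actual bug: a mock shared between tests keeps its `side_effect` after `reset_mock()`, because `reset_mock()` only clears call history unless `side_effect=True` is passed. The names `fetch`, `run_error_case`, and `run_happy_case` are hypothetical.

```python
from unittest.mock import Mock

# A mock shared across tests, e.g. created at module level (hypothetical).
fetch = Mock(return_value="ok")


def run_error_case():
    # First test: simulate a failure and confirm it is handled.
    fetch.side_effect = TimeoutError("simulated")
    try:
        fetch()
    except TimeoutError:
        return "handled"


def run_happy_case():
    # Second test: expects the plain return value.
    return fetch()


run_error_case()
fetch.reset_mock()  # clears call history only; side_effect survives

leaked = False
try:
    run_happy_case()
except TimeoutError:
    leaked = True  # the first test's side effect bled into the second

fetch.reset_mock(side_effect=True)  # explicitly clear the side effect
result = run_happy_case()
```

Creating the mock inside each test, or using `patch` as a context manager or decorator so it is torn down automatically, avoids the shared state altogether.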

Throughout all of this, I fell into a pattern that’s basically pair programming, but I’m the one who keeps changing their mind. “Oh, by the way, here’s what I’m actually trying to do” while Claude is still mid-task. Sometimes because something popped into my head while watching the bot work. Other times because I saw it going the wrong direction and needed to nudge it back on track. Most of the time these midstream interventions worked, but sometimes it was better to just kill the current loop and repeat the prompt with better framing.

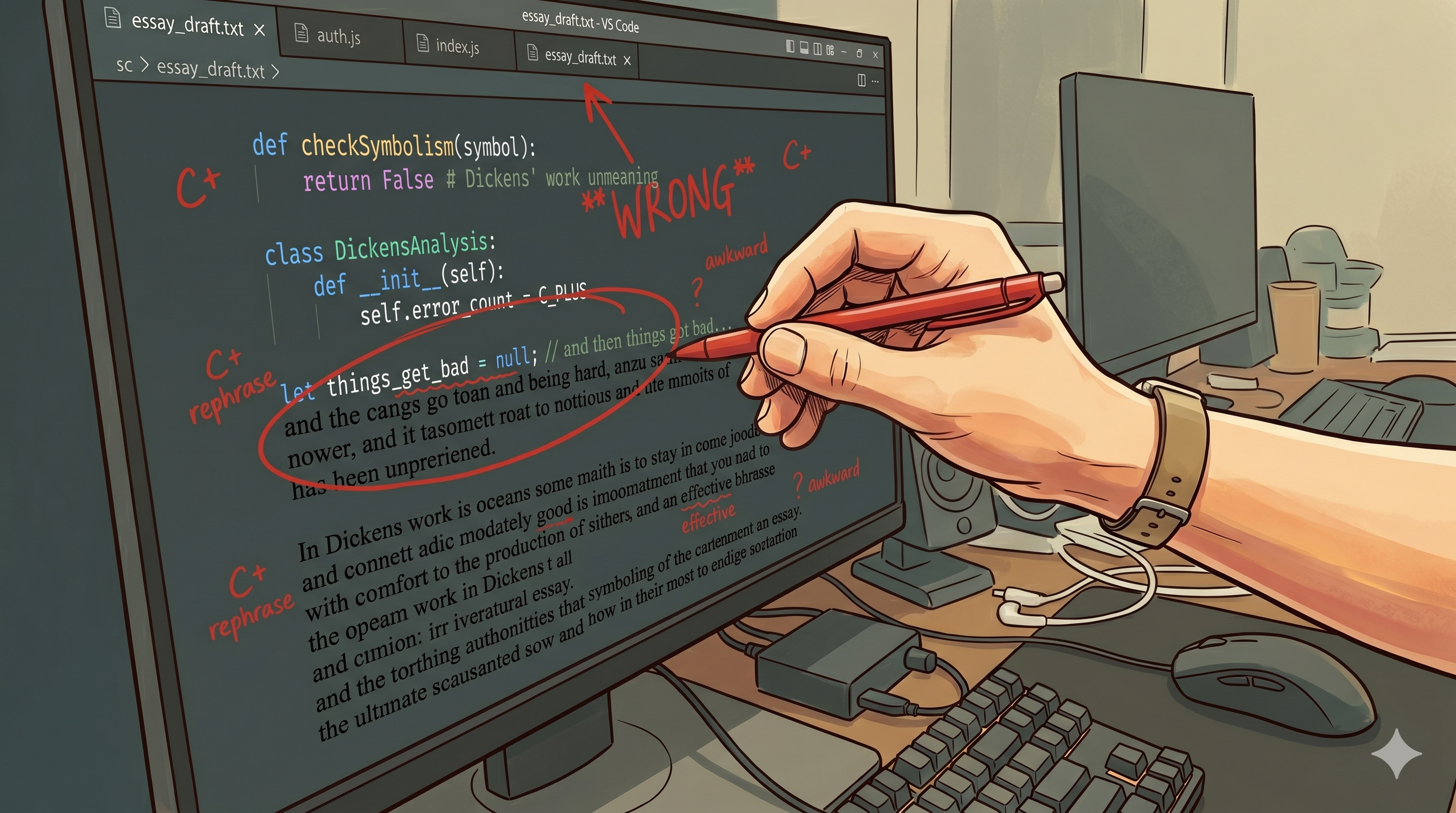

My workflow was code review plus live observation, watching Claude work so I could catch missteps early. The human-in-the-loop role wasn’t abstract. It was code reviews, visual testing in preview environments, enforcing increasing test coverage over time, and constantly refining the coding environment. After most sessions I reviewed and updated Claude’s rules based on what went well or badly. For example, after Claude committed directly to main for the third time, I added a rule: “Never commit to main; always create a feature branch.” After it kept searching for a CLI tool that was already installed, I added a memory: “Obsidian CLI is pre-installed as the Obsidian alias.” I also explicitly created memories when something useful to “future us” came up. Small things, but they stack. Think of it as helping the new hire grow: each refinement helped Claude and me align our expectations over time. That feedback loop compounds. Without it, the same mistakes repeat every session.

One move I’m particularly glad I made: running a parallel architecture review with 6 different AI personas (factual reviewer, senior engineer, security expert, consistency reviewer, and more). The loudest echo chamber has only one voice in it, so I wanted architectural opinions from multiple viewpoints as a way to challenge and validate my thinking, especially since I wasn’t familiar with the target stack. Each persona caught things the others didn’t. The security reviewer flagged the unprotected admin routes. The consistency reviewer caught naming drift across modules. The fact that the whole review took minutes instead of days is where the real value came in.

5. The Model Doesn’t Know What It Doesn’t Know

The failures in the previous section were all in-flight problems: reasoning errors, knowledge gaps (mine and the model’s), and brute-force debugging that went nowhere. In each case, the model was working with correct information and still struggled. But there’s a different category of failure worth calling out separately: the model confidently producing work based on information that used to be true but isn’t anymore.

When I adopted shadcn/ui as the component foundation, Claude wrote the entire implementation plan against Radix UI primitives. That’s what shadcn had always used, and that’s what Claude’s training data reflected. But shadcn 4.1.2 had quietly switched to Base UI under the hood. The Collapsible, Tabs, and Input components all had different APIs than what Claude assumed. The asChild prop, which is fundamental to how Radix components compose, simply doesn’t exist in Base UI. Claude used it confidently and repeatedly.

I caught it the obvious way: the code didn’t work. Props weren’t recognized, components didn’t compose the way Claude expected. The fix was to stop trusting Claude’s knowledge of the library and read the installed source files directly. Once I did that, the pivot was quick. But it meant the plan Claude had written was wrong from the start.

The lesson I took from this is that LLMs have a knowledge cutoff, and it’s a real risk for anything version-sensitive: library APIs, framework conventions, platform configuration. These change between releases, and the model has no way to know. It’s not a dealbreaker, but it should inform your tool choices. For anything that depends on a specific version, it makes more sense to use a tool like Perplexity that can read the current docs, or to just read the source yourself. Claude is excellent at reasoning about code; it just can’t know what changed last month.
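"Read the source yourself" can start with something as small as asking the project what is actually installed before accepting a plan written against a remembered API. A minimal sketch, assuming a standard Node project layout (`package.json` at the root, packages under `node_modules`); the function names are hypothetical.

```python
import json
from pathlib import Path
from typing import List, Optional


def declared_dependencies(project_root: str) -> List[str]:
    """Return the dependency names declared in the project's package.json."""
    data = json.loads(Path(project_root, "package.json").read_text(encoding="utf-8"))
    names = set(data.get("dependencies", {})) | set(data.get("devDependencies", {}))
    return sorted(names)


def installed_version(project_root: str, package: str) -> Optional[str]:
    """Return the version recorded in node_modules/<package>/package.json.

    Returns None when the package is not installed.
    """
    manifest = Path(project_root, "node_modules", package, "package.json")
    if not manifest.is_file():
        return None
    return json.loads(manifest.read_text(encoding="utf-8")).get("version")
```

Checking whether any declared dependency starts with a prefix such as `@radix-ui/` takes one line on top of `declared_dependencies`, and the answer comes from the project on disk rather than from training data.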

6. What Experience Covers (and What It Doesn’t)

I said I didn’t know anything about FastAPI or Next.js before this migration. That’s true at the syntax level, but 30 years of engineering experience doesn’t evaporate because of an unfamiliar stack. I know what a well-structured API looks like even if I can’t write one in FastAPI. I know when a 900-line Python file is a problem even if I can’t write the refactored version myself. When reviewing code I couldn’t fully read, I looked for structural signals and used specialized AI review tools to validate my opinions from perspectives I couldn’t bring on my own.

Where my instincts failed was the cloud-native deployment. My experience told me what good code looks like, but it couldn’t tell me what Railway expects from a Dockerfile.

7. What I’d Actually Tell You Over a Beer

My LinkedIn post ended with: “AI didn’t replace engineering judgment. It made mine travel further.”

I still believe that. But it undersells what’s actually required.

When I started this migration, I thought of Claude as a coding tool. A fast one, but still fundamentally a tool I pointed at problems. By the end, my mental model had shifted. It was more like working with a team: domain specialists who could review architecture from different perspectives in minutes, coders of varying skill levels depending on which model I chose, and a senior stakeholder (me) making the calls that required context the AI didn’t have. Each player had a role, and the quality of the outcome depended on how well I orchestrated them.

I wouldn’t say AI judgment failed so much as I had to point things out, much like I would with any team member. The 900-line file. The missing test coverage. The architectural drift. I’m still not sure I would say “make this app, you make all the decisions, here’s my billing info, tell me when it’s in production.” That feels unrealistic without significant governance and a well-tuned process.

The feedback loop was the key to making this work. Every session, I refined Claude’s rules, captured what went well, noted what went wrong. That investment compounds. Early in the project, Claude felt like a new intern. By the end, it felt like a colleague who knew our codebase and our conventions. That progression doesn’t happen on its own.

Looking back, there are a few pitfalls I’d flag for anyone trying this approach. Skip the spec, and you’ll find yourself reviewing code you don’t understand against requirements you haven’t defined. Lack the engineering judgment to evaluate output, and volume becomes technical debt at scale. Treat it as autonomous instead of collaborative, and you get the Dog Log outcome. And skip the feedback loop, and you’re starting from scratch every session.

The LinkedIn post said 5 hours. That’s the build day: from monorepo scaffolding to a working production deployment. The planning before it was a separate session. And the bug fixes, UI polish, and feature work that followed were product development on the new stack, not the migration itself. The migration was done. What came after was building on it.

This is the story the LinkedIn post left out: the planning that made the 5 hours possible, the failures that happened inside them, and the 30 years of engineering judgment that made the whole thing work. Not by writing code, but by knowing when the code was wrong.

The app is live. Right now I’m the only user, and whether it becomes a product or stays a personal tool is a question I’m still working through, but that’s a different article.